20 Detecting Cycles

20.1 A Running Example

import lists as L

data Pair: p(hd, ref tl) end

p0 = p(0, 0)

p1 = p(1, 1)

p2 = p(2, 3)

p3 = p(3, 4)

p2!{tl: p3}

p3!{tl: p2}

p4 = p(4, p3)

p5 = p(5, p4)

p6 = p(6, "dummy")

p6!{tl: p6}Do Now!

Sketch out the above pairs to make sure you see all the cycles.

So we have two that participate in no cyclic behavior (p0 and

p1), two (p2 and p3 that are mutually-cyclic, one

(p6) that is a self-cycle, and two (p4 and p5)

that lead to a cycle.

20.2 Types

tl, but it’s not clear what this can be:

sometimes it’s a Number, and other times it’s a

Pair. However, we might observe that if our goal is to

create cyclic data, then we want tl to refer to a Pair

or to nothing at all. That suggests that a useful type is:

data Pair: p(hd :: Number, ref tl :: Option<Pair>) endp0 = p(0, none)

p1 = p(1, none)

p2 = p(2, none)

p3 = p(3, none)

p2!{tl: some(p3)}

p3!{tl: some(p2)}

p4 = p(4, some(p3))

p5 = p(5, some(p4))

p6 = p(6, none)

p6!{tl: some(p6)}Option

everywhere. Since our goal is to focus on cycles, and this would

become unwieldy, we ignore the typed version from now on.20.3 A First Checker

Okay, back to the untyped version.

cc :: Pair -> Booleancc stands for “check cycle”.Critically, it’s important that this be a total function: i.e., it always terminates.

fun cc(e):

fun loop(cur, hist):

if is-p(cur):

if L.member-identical(hist, cur):

true

else:

loop(cur!tl, link(cur, hist))

end

else:

false

end

end

loop(e, empty)

endcheck:

cc(p0) is false

cc(p1) is false

cc(p2) is true

cc(p3) is true

cc(p4) is true

cc(p5) is true

cc(p6) is true

endcheck:

p0 violates cc

p2 satisfies cc

endcc is a desirable property, so p2 is

a “good” instance and p0 is a “bad” one. However, cc

is not a judgment of quality—20.4 Complexity

Now that we have determined that it terminates, we can ask for its

time and space complexity. First we have to decide what we are even

computing the complexity over. If the sequence is finite, then the

size is clearly the size of the sequence. But if it’s infinite, we

don’t want to traverse the “whole thing”: rather, we mean its finite

part (excluding any repetition). So the meaningful measure in either

case is the number of p nodes, i.e., the finite size. It may

just be that some of these lead back to themselves, so that a naïve

traversal will go on forever.

Okay, so we visit each node once. We keep track of all the nodes just in case we double back over, either until we run out of nodes or we repeat one. Therefore, the space complexity is linear in the length of the sum of the prefix (from the starting node) and the cycle. The time complexity is that but also, at each point, we have to check membership, so it’s quadratic in the length of that prefix + cycle. So: linear space, quadratic time, in the size of the prefix + cycle.

Now, some degree of linear behavior is unavoidable: we clearly have to keep going until we run out or hit a cycle, so for detecting the cycle having something be linear in the size of the prefix (get it out of the way) + length of the cycle (find the cycle) seems essential. But can we improve on this complexity? It seems unlikely: by definition, how can we check for a cycle if we don’t remember everything we’ve seen?

Our first hunch might be, “Maybe there’s another space-time tradeoff!” But it’s not so clear here. Our space is linear and time quadratic, so we may think we can flip those around. But the time can’t be less than the space! If, for instance, we had linear time and quadratic space, that wouldn’t make sense, because we’d need at least quadratic time just to fill the space. So that’s not going to work so well.

Instead, the best way to improve seems to have a better lookup data

structure. We’d still take linear space—

20.5 A Fabulous Improvement

It turns out we can do a lot better! It’s called the tortoise-and-hare algorithm.

We start off with two references into the sequence, one called the tortoise and the other the hare.

At each step, the tortoise tries to advance by one node. If it cannot, we’ve run out of sequence, and we’re done. The hare, being a hare and not a tortoise, tries to advance by two nodes. Again, if it cannot, we’ve run out of sequence, and we’re done. Otherwise both advance, and check if they’re at the same place. If they are, because they started out being at distinct nodes, we’ve found a cycle! If they are not, then we iterate.

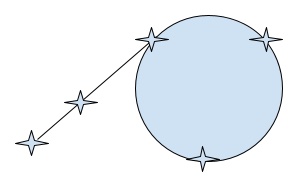

Why does this even work? In the finite case it’s clear, because the hare will run out of next nodes. We only have to think about the infinite case. There, in general, we have this kind of situation:

There is some prefix of nodes, followed by a cycle. Now, we don’t know

how long the prefix is, so we don’t know how far ahead of the tortoise

the hare is. Nevertheless, there is some first point at which the

tortoise enters the cycle. (There must be, because the tortoise always

makes progress, and the prefix can only be finite.) From this point

on, we know that on each step, the relative speed of the two animals

is 1. That means the hare “gains” 1 on the tortoise every step. We

can see that eventually, the hare must catch up with the

tortoise—

Now let’s analyze this. The tortoise will get caught by the time it has completed one loop of the cycle. Because the tortoise moves one step at a time, the total time is the length of the prefix + length of the loop. In terms of space, however, we no longer need any history at all; we only need the current positions of the tortoise and hare. Therefore, our time complexity is linear, but the space complexity is now significantly smaller: down to constant!

fun th(e):

fun loop(tt, hr):

if tt <=> hr:

true

else:

if is-p(tt) and is-p(hr):

new-tt = tt!tl

int-hr = hr!tl

if is-p(int-hr):

new-hr = int-hr!tl

loop(new-tt, new-hr)

else:

false

end

else:

false

end

end

end

loop(e, e!tl)

end20.6 Testing

check:

cc(p0) is false

cc(p2) is true

endcc replaced by ph), we should instead write them as follows:

check:

cc(p0) is th(p0)

cc(p1) is th(p1)

cc(p2) is th(p2)

cc(p3) is th(p3)

cc(p4) is th(p4)

cc(p5) is th(p5)

cc(p6) is th(p6)

endThis confers two advantages. First, if we change the example, we don’t

have to update two tests, only one. But the much more important reason

is that we intend for pr to be an optimized version of

cc. That is, we expect the two to produce the same result. We

can think of cc as our clear, reference implementation. That

is, this is another instance of model-based testing.

As an aside, this algorithm is not exactly what Pyret does, because we need to check for arbitrary graph-ness, not just cycles. It’s also complicated due to user-defined functions, etc.