15.1 Sharing and Equality

15.1.1 Re-Examining Equality

data BinTree:

| leaf

| node(v, l :: BinTree, r :: BinTree)

end

a-tree =

node(5,

node(4, leaf, leaf),

node(4, leaf, leaf))

b-tree =

block:

four-node = node(4, leaf, leaf)

node(5,

four-node,

four-node)

endb-tree

is morally equivalent to how we’ve written a-tree, but we’ve

created a helpful binding to avoid code duplication.a-tree and b-tree are bound to trees with

5 at the root and a left and right child each containing

4, we can indeed reasonably consider these trees

equivalent. Sure enough:

check:

a-tree is b-tree

a-tree.l is a-tree.l

a-tree.l is a-tree.r

b-tree.l is b-tree.r

endHowever, there is another sense in which these trees are not

equivalent. concretely, a-tree constructs a distinct node for

each child, while b-tree uses the same node for both

children. Surely this difference should show up somehow, but we

have not yet seen a way to write a program that will tell these

apart.

is operator uses the same equality test as

Pyret’s ==. There are, however, other equality tests in

Pyret. In particular, the way we can tell apart these data is by using

Pyret’s identical function, which implements

reference equality. This checks not only whether two

values are structurally equivalent but whether they are

the result of the very same act of value construction.

With this, we can now write additional tests:

check:

identical(a-tree, b-tree) is false

identical(a-tree.l, a-tree.l) is true

identical(a-tree.l, a-tree.r) is false

identical(b-tree.l, b-tree.r) is true

endLet’s step back for a moment and consider the behavior that gives us this

result. We can visualize the different values by putting each distinct value

in a separate location alongside the running program. We can draw the

first step as creating a node with value 4:

a-tree = node(5, 1001, node(4, leaf, leaf)) b-tree = block: four-node = node(4, leaf, leaf) node(5, four-node, four-node) end

Heap

- 1001:

node(4, leaf, leaf)

The next step creates another node with value 4, distinct from the

first:

a-tree = node(5, 1001, 1002) b-tree = block: four-node = node(4, leaf, leaf) node(5, four-node, four-node) end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf)

Then the node for a-tree is created:

a-tree = 1003 b-tree = block: four-node = node(4, leaf, leaf) node(5, four-node, four-node) end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002)

When evaluating the block for b-tree, first a single node is

created for the four-node binding:

a-tree = 1003 b-tree = block: four-node = 1004 node(5, four-node, four-node) end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002) - 1004:

node(4, leaf, leaf)

These location values can be substituted just like any other, so they get

substituted for four-node to continue evaluation of the

block.We skipped substituting a-tree for the moment, that

will come up later.

a-tree = 1003 b-tree = block: node(5, 1004, 1004) end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002) - 1004:

node(4, leaf, leaf)

Finally, the node for b-tree is created:

a-tree = 1003 b-tree = 1005

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002) - 1004:

node(4, leaf, leaf) - 1005:

node(5, 1004, 1004)

This visualization can help us explain the test we wrote using identical.

Let’s consider the test with the appropriate location references substituted

for a-tree and b-tree:

check: identical(1003, 1005) is false identical(1003.l, 1003.l) is true identical(1003.l, 1003.r) is false identical(1005.l, 1005.r) is true end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002) - 1004:

node(4, leaf, leaf) - 1005:

node(5, 1004, 1004)

check: identical(1003, 1005) is false identical(1001, 1001) is true identical(1001, 1004) is false identical(1004, 1004) is true end

Heap

- 1001:

node(4, leaf, leaf) - 1002:

node(4, leaf, leaf) - 1003:

node(5, 1001, 1002) - 1004:

node(4, leaf, leaf) - 1005:

node(5, 1004, 1004)

is operator can also be parameterized by a different equality

predicate than the default ==. Thus, the above block can

equivalently be written as:We can use is-not

to check for expected failure of equality.

check:

a-tree is-not%(identical) b-tree

a-tree.l is%(identical) a-tree.l

a-tree.l is-not%(identical) a-tree.r

b-tree.l is%(identical) b-tree.r

endcheck:

a-tree is b-tree

a-tree is-not%(identical) b-tree

a-tree.l is a-tree.r

a-tree.l is-not%(identical) a-tree.r

endidentical really means

[Understanding Equality]

(Pyret has a full range of equality operations suitable for different situations).Exercise

There are many more equality tests we can and should perform even with the basic data above to make sure we really understand equality and, relatedly, storage of data in memory. What other tests should we conduct? Predict what results they should produce before running them!

15.1.2 The Cost of Evaluating References

From a complexity viewpoint, it’s important for us to understand how

these references work. As we have hinted, four-node is computed

only once, and each use of it refers to the same value: if, instead,

it was evaluated each time we referred to four-node, there

would be no real difference between a-tree and b-tree,

and the above tests would not distinguish between them.

L = range(0, 100)L1 = link(1, L)

L2 = link(-1, L)L to be

considerably more than that for a single link

operation. Therefore, the question is how long it takes to compute

L1 and L2 after L has been computed: constant

time, or time proportional to the length of L?L is computed once and bound to L; subsequent

expressions refer to this value (hence “reference”)

rather than reconstructing it, as reference equality shows:

check:

L1.rest is%(identical) L

L2.rest is%(identical) L

L1.rest is%(identical) L2.rest

endL to it, and see

whether the resulting argument is identical to the original:

fun check-for-no-copy(another-l):

identical(another-l, L)

end

check:

check-for-no-copy(L) is true

endcheck:

L satisfies check-for-no-copy

end.rest) nor

user-defined ones (like check-for-no-copy) make copies of their

arguments.Strictly speaking, of course, we cannot

conclude that no copy was made. Pyret could have made a copy,

discarded it, and still passed a reference to the original. Given how

perverse this would be, we can assume—15.1.3 Notations for Equality

Until now we have used == for equality. Now we have learned that it’s

only one of multiple equality operators, and that there is another one called

identical. However, these two have somewhat subtly different syntactic

properties. identical is a name for a function, which can

therefore be used to refer to it like any other function (e.g., when we need to

mention it in a is-not clause). In contrast, == is a binary

operator, which can only be used in the middle of expressions.

identical and a function name equivalent of

==. They do, in fact, exist! The operation performed by == is

called equal-always. Therefore, we can write the first block of tests

equivalently, but more explicitly, as

check:

a-tree is%(equal-always) b-tree

a-tree.l is%(equal-always) a-tree.l

a-tree.l is%(equal-always) a-tree.r

b-tree.l is%(equal-always) b-tree.r

endidentical is <=>.

Thus, we can equivalently write check-for-no-copy as

fun check-for-no-copy(another-l):

another-l <=> L

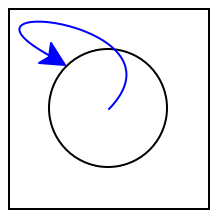

end15.1.4 On the Internet, Nobody Knows You’re a DAG

Despite the name we’ve given it, b-tree is not actually a

tree. In a tree, by definition, there are no shared nodes,

whereas in b-tree the node named by four-node is shared

by two parts of the tree. Despite this, traversing b-tree will

still terminate, because there are no cyclic references in it:

if you start from any node and visit its “children”, you cannot end

up back at that node. There is a special name for a value with such a

shape: directed acyclic graph (DAG).

Many important data structures are actually a DAG underneath. For instance, consider Web sites. It is common to think of a site as a tree of pages: the top-level refers to several sections, each of which refers to sub-sections, and so on. However, sometimes an entry needs to be cataloged under multiple sections. For instance, an academic department might organize pages by people, teaching, and research. In the first of these pages it lists the people who work there; in the second, the list of courses; and in the third, the list of research groups. In turn, the courses might have references to the people teaching them, and the research groups are populated by these same people. Since we want only one page per person (for both maintenance and search indexing purposes), all these personnel links refer back to the same page for people.

data Content:

| page(s :: String)

| section(title :: String, sub :: List<Content>)

endpeople-pages :: Content =

section("People",

[list: page("Church"),

page("Dijkstra"),

page("Hopper") ])fun get-person(n): get(people-pages.sub, n) endtheory-pages :: Content =

section("Theory",

[list: get-person(0), get-person(1)])

systems-pages :: Content =

section("Systems",

[list: get-person(1), get-person(2)])site :: Content =

section("Computing Sciences",

[list: theory-pages, systems-pages])check:

theory = get(site.sub, 0)

systems = get(site.sub, 1)

theory-dijkstra = get(theory.sub, 1)

systems-dijkstra = get(systems.sub, 0)

theory-dijkstra is systems-dijkstra

theory-dijkstra is%(identical) systems-dijkstra

end15.1.5 It’s Always Been a DAG

What we may not realize is that we’ve actually been creating a DAG for longer

than we think. To see this, consider a-tree, which very clearly seems to

be a tree. But look more closely not at the nodes but rather at the

leaf(s). How many actual leafs do we create?

leaf:

the data definition does not list any fields, and when constructing a

BinTree value, we simply write leaf, not (say)

leaf(). Still, it would be nice to know what is happening behind the

scenes. To check, we can simply ask Pyret:

check:

leaf is%(identical) leaf

endleaf <=> leaf here, because

that is just an expression whose result is ignored. We have to write is

to register this as a test whose result is checked and reported.

and this check passes. That is, when we write a variant without any

fields, Pyret automatically creates a singleton: it makes just one

instance and uses that instance everywhere. This leads to a more efficient

memory representation, because there is no reason to have lots of distinct

leafs each taking up their own memory. However, a subtle consequence of

that is that we have been creating a DAG all along.leaf to be distinct, it’s easy: we can write

data BinTreeDistinct:

| leaf()

| node(v, l :: BinTreeDistinct, r :: BinTreeDistinct)

endleaf function everywhere:

c-tree :: BinTreeDistinct =

node(5,

node(4, leaf(), leaf()),

node(4, leaf(), leaf()))check:

leaf() is-not%(identical) leaf()

end15.1.6 From Acyclicity to Cycles

web-colors = link("white", link("grey", web-colors))map2(color-table-row, table-row-content, web-colors)color-table-row function to two arguments: the

current row from table-row-content, and the current color from

web-colors, proceeding in lockstep over the two lists.Unfortunately, there are many things wrong with this attempted definition.

Do Now!

Do you see what they are?

This will not even parse. The identifier

web-colorsis not bound on the right of the=.- Earlier, we saw a solution to such a problem: use

rec[Streams From Functions]. What happens if we writerec web-colors = link("white", link("grey", web-colors))instead?Exercise

Why does

recwork in the definition ofonesbut not above? Assuming we have fixed the above problem, one of two things will happen. It depends on what the initial value of

web-colorsis. Because it is a dummy value, we do not get an arbitrarily long list of colors but rather a list of two colors followed by the dummy value. Indeed, this program will not even type-check.Suppose, however, thatweb-colorswere written instead as a function definition to delay its creation:fun web-colors(): link("white", link("grey", web-colors())) endOn its own this just defines a function. If, however, we use it—web-colors()—it goes into an infinite loop constructing links.Even if all that were to work,

map2would either (a) not terminate because its second argument is indefinitely long, or (b) report an error because the two arguments aren’t the same length.

When you get to cycles, even defining the datum becomes difficult because its definition depends on itself so it (seemingly) needs to already be defined in the process of being defined. We will return to cyclic data later in Cyclic Data, and to this specific example in Recursion and Cycles from Mutation.